Source: MIT Technology Review

Summary

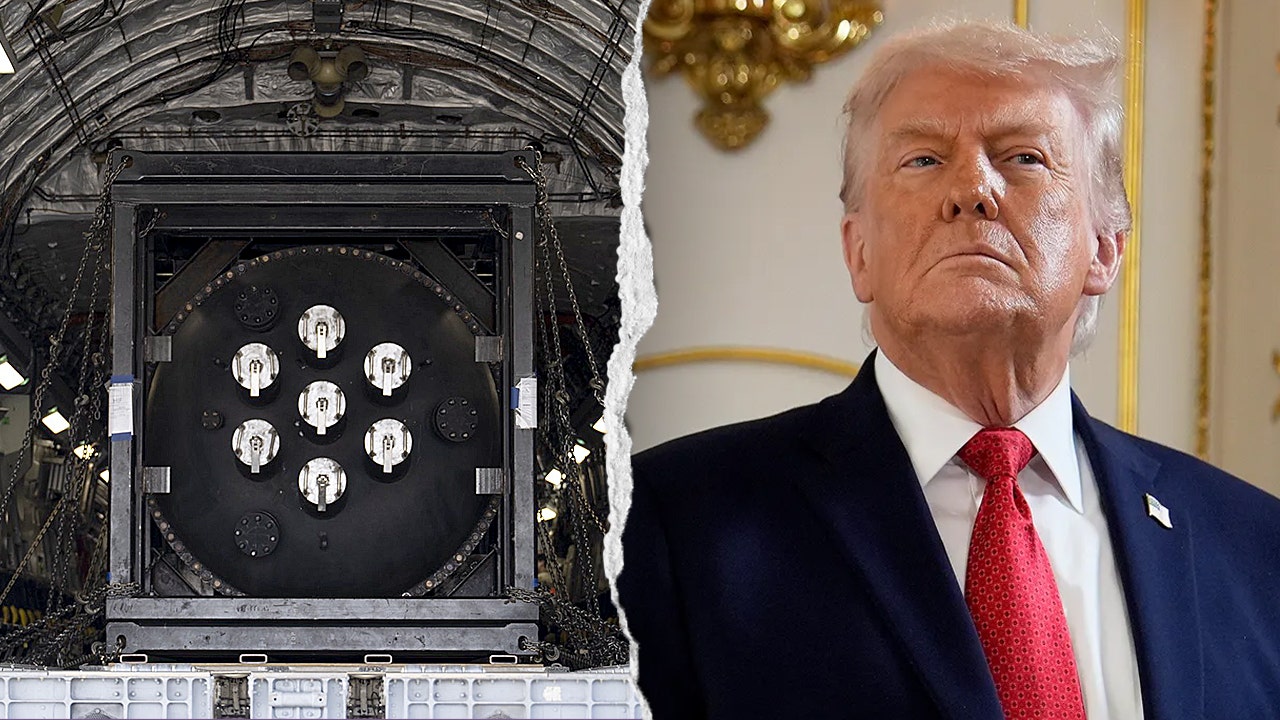

Researchers have raised concerns about the potential misuse of Anthropic’s AI chatbot Claude, citing its possible use in mass domestic surveillance and autonomous weapons. The chatbot’s capabilities, such as its ability to understand and respond to natural language, have sparked fears about how it might be applied. The concerns were raised in a paper published by researchers from the University of California, Berkeley, and the University of Oxford. The paper highlights the need for greater transparency and accountability in the development of AI systems like Claude.

Our Reading

The launch follows a familiar script.

Anthropic’s AI chatbot Claude is the latest in a line of “revolutionary” AI tools that promise to change the world. Researchers are worried that Claude could be used for mass surveillance and autonomous weapons, because of course it could. The chatbot’s ability to understand natural language is being hailed as a breakthrough, but it’s really just a rehash of existing tech. The real concern is that Claude will be used to further erode our already dwindling privacy rights. Because what could possibly go wrong with a powerful AI system that can understand and respond to human language?

Author: Evan Null